The Promise and Disappointment of Virtual Reality

A Cultural History of VR—And its Repeated Failure to Catch On

Imagine that you and a group of friends are walking through a tunnel, carrying a variety of objects. A backpack. A vase. A toy spaceship. The cardboard cut-out of a dragon. The tunnel broadens, passing through the centre of a large cavern. To your right is a large bonfire; to your left, the cave drops away into a dark pit. In this pit, a group of prisoners are chained to a wall, manacles around their ankles, necks and wrists. All they can see, all they have ever seen, are the flickering shadows thrown by the bonfire, projected onto the cave wall in front of them. “Then in every way, such prisoners would deem reality to be nothing else than the shadows of the artificial objects,” Socrates says in the “Allegory of the Cave,” depicted in Plato’s Republic.

He goes on to ruminate on the difficult process of freeing these prisoners and introducing them to reality. The first prisoner initially rejects what he is shown, but in time is able to comprehend the sun and perceive reality in its entirety. Exposed to the truth, the prisoner naturally desires to return to the cave—to free those still living a fake existence. Socrates warns of the reception this prisoner will face; the other prisoners know reality only as they have experienced it. Descending again, the returning prisoner’s eyes take some time to adjust to the darkness; to those who never left he appears damaged, no longer able to comprehend reality, an easy target for ridicule and scorn.

Debate around the meaning of Plato’s allegory centers on whether the story is about how we learn and understand things as individuals—what we perceive and know is not necessarily what others see or understand—or whether it is more political in nature: knowledge has the potential to transform our understanding of the world and the way we interact with it. For me, the two interpretations are not mutually exclusive.

Perhaps the most famous cultural reference to Plato’s Cave is Lana and Lilly Wachowski’s 1999 film The Matrix. Eighteen-year-old spoiler alert: the main character, Neo, a disgruntled corporate employee in a ubiquitous 90s cityscape, is shown that what he thinks is real is actually a virtual reality (VR) construct enslaving the majority of humanity, keeping people passive while the robot rulers of the future use them as batteries.

“You have to understand, most of these people are not ready to be unplugged,” the character Morpheus says, after he has freed Neo from the simulation and is instructing him on how to save the world. “Many of them are so inured, so hopelessly dependent on the system, that they will fight to protect it.”

The Matrix emerged towards the end of a second wave of interest in VR as a physical technology, with the idea of a simulated world moving from philosophical concept to marketable commodity: the film was the fourth-highest grossing of 1999, the first DVD to sell more than three million copies in the United States, and a major influence on a generation of filmmakers.

The first VR wave came decades earlier, in the 1950s and 60s, as the world moved into a series of conflicts clouded by the threat of nuclear war and potential world annihilation. While some science fiction writers were writing post-apocalyptic dystopias (John Wyndham’s The Day of the Triffids and The Chrysalids, or Richard Matheson’s I Am Legend), others, like Isaac Asimov, Robert Heinlein, James Tiptree Jr, Arthur C. Clarke, Katherine MacLean, Ursula Le Guin and Philip K. Dick, were imagining futures based on emerging technologies: telephones, computers, television sets, modems.

VR’s most famous early appearance came in Ray Bradbury’s 1953 novel Fahrenheit 451, set in a dystopian world where books are outlawed. The main desire of Mildred, wife of protagonist Guy Montag, is to get a fourth wall for her television so that she can achieve complete immersion in her favorite interactive TV program, The Family, in which people take on assigned roles in a fictional household. The novel reflects on the rise of McCarthyism, totalitarianism, and government overreach in the US, as well as the Cold War. Montag makes reference to Plato’s allegory, too: “Maybe the books can get us half out of the cave. They just might stop us from making the same damn insane mistakes!” In Montag’s world, as in Bradbury’s, the external forces of paranoid government and burgeoning capitalism were reshaping the reality of both the individual and the world at large.

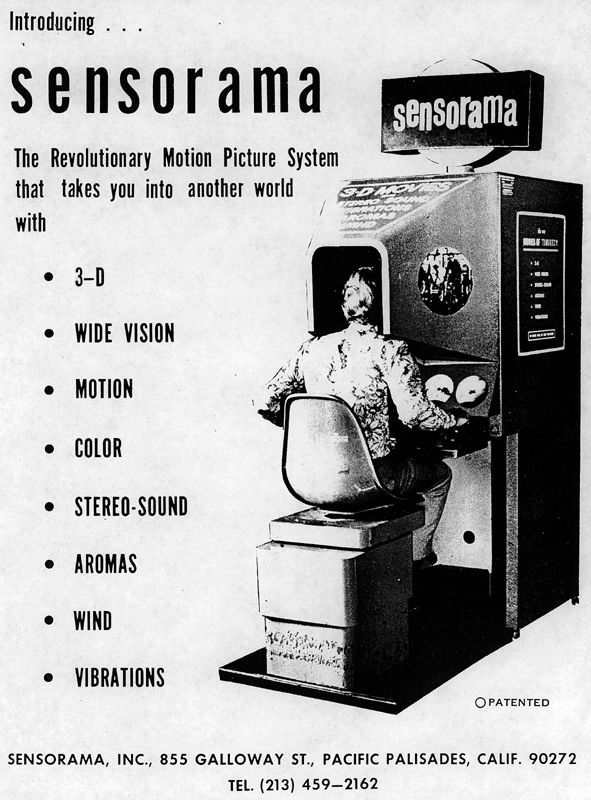

Technologically, the first VR device was Morton Heilig’s Sensorama—a “Revolutionary Motion Picture System that takes you into another world with 3-D, wide vision, motion, color, stereo-sound, aromas, wind, vibrations.” Heilig shot, produced, and edited the films himself. Titles included Motorcycle, Belly Dancer and I’m a Coca Cola Bottle. The machine had a bucket seat, handles, vents and a hooded canopy. In the end, the machinery was too complex and expensive, and Heilig failed to find investors; the Sensorama remained a prototype.

At the end of the 1960s, the first computerised VR devices appeared—interestingly, both were to do with aviation. Thomas A. Furness, III, an inventor, introduced the first flight simulator to the US Air Force in 1966. A couple of years later, Ivan Sutherland and Bob Sproull at Harvard University created the first VR head-mounted display (HMD)—nicknamed “The Sword of Damocles” because it was so large—which was designed to help helicopter pilots land at night.

When VR next starred in popular culture, it was the early 1980s, and mass consumerism, technology and popular culture walked hand-in-hand. The Star Wars and Star Trek franchises were the vanguard, taking science fiction and fantasy stories, ideas and culture out of the fringes and into the mainstream. The neoliberal economic policies of Reagan in the US, Thatcher in the UK, and later Douglas in New Zealand, and Hawke and Keating in Australia, pushed globalization and the pursuit of personal wealth into overdrive. Computers became smaller, faster, more affordable, and aimed more at personal fulfillment, while the end of the decade saw the introduction of the World Wide Web. The West’s cultural push towards individualization, along with the greater social freedoms won by the movements of the previous decades, began to open cracks in once tightly controlled, hierarchical and patriarchal systems like church groups, sporting teams and the family. Identity shifted from the community-based structures we were part of to the cultural products we consumed.

In the midst of this came William Gibson’s 1984 debut novel, Neuromancer, which won the Nebula, Hugo, and Philip K. Dick awards, an achievement described as being “the sci-fi writer’s version of winning the Goncourt, Booker, and Pulitzer prizes in the same year.” Popularizing the terms “cyberspace” and “the matrix,” the book is considered the archetypal cyberpunk work. Gibson created a near-future world of high technological and scientific advancement juxtaposed against crumbling social cohesion and the struggle to maintain a sense of self or meaning. Where mainstream science fiction had become the realm of grand galactic vistas and operatic space battles between the forces of good and evil, cyberpunk was urban, close and personal—with a style and tone reminiscent of film noir, where the boundary between right and wrong was as blurred as conceptions of what was real.

Neuromancer follows Case, a down-on-his-luck hacker in Chiba City, Japan, who is enlisted by an artificial intelligence (AI) construct to help it break free from its human-imposed limits. The narrative switches between Case in the real world, a virtual reality world Case accesses via a jack in the back of his neck, and the perspective of “razorgirl” Molly Millions, which Case experiences via one of her many cybernetic modifications. As Case flickers between these three subjectivities, the AI attempts to distract him with worlds and scenarios that appeal to Case’s various psychological weaknesses, attempting to trap him within a virtual world by making it more attractive than the “real” one. Where the heroes and protagonists of mainstream science fiction played leading roles in the future direction of great social and political change, Case is more an antihero, manipulated into participating in the schemes, games, and power struggles of the powerful. Like Montag in Fahrenheit 451 pushing back futilely against the grinding machine of consumerism and a totalitarian state, like the masses of newly unemployed under Thatcher in Britain, Case struggles to avoid being crushed between colossal entities determined to maintain and cultivate more power at any cost.

It was around this time that French philosopher Jean Baudrillard produced his seminal work Simulacra and Simulation. Baudrillard begins this examination of reality by referencing a short fable by Argentinian writer Jorge Luis Borges called “On Exactitude in Science.” In the story, Borges describes the creation of a map within an empire that is so detailed, exact and accurate that it is the same size as the empire. Subsequent generations, however, are not as interested in cartography, so the map fades over time until all that is left are “Tattered Ruins . . . in the Deserts of the West.” According to Baudrillard, it is not the map that has faded but reality itself. From the pre-modern era through the industrial revolution to whatever stage of capitalism we are now in, he argues, we have lost the ability to recognize what is real. We are completely separated from the materials and processes that make up everything around us, everything we consume. Signs have gone from being faithful copies to representations with no originals. Our cultural and media institutions create realities and ways of being that are hyperreal, which we then replicate, buy into, and export as representations of our national and cultural identity.

“It is the real, and not the map, whose vestiges subsist here and there, in the deserts which are no longer those of the Empire, but our own,” Baudrillard theorizes. “The desert of the real itself.” (Simulacra and Simulation is the second major philosophical work paid homage to in The Matrix. After freeing Neo from the virtual reality prison, Morpheus takes him into another reality construct to introduce him to the “real world.” Sitting in a red leather armchair in the middle of a dark, desolate post-apocalyptic landscape, Morpheus spreads his arms wide. “Welcome to the desert of the real,” he says.)

Throughout the 1990s, faster processing speeds, advancements in computer graphics, and the development of the Web brought the promise of VR closer to reality, a promise augmented by various representations in popular culture. Building on cyberpunk’s intersection of technology and identity, Neal Stephenson published the novel Snow Crash in 1992. The novel follows an Afro-Asian katana-wielding hacker, Hiro Protagonist, on a witty romp examining language, religion and globalization; it is also the book that popularized the word “avatar,” now the accepted term for online virtual bodies. That same year saw the release of Brett Leonard’s film The Lawnmower Man, in which Jobe Smith, a local greenkeeper living with an intellectual disability, develops telekinesis and pyrokinesis after a scientist boosts his intelligence via a combination of drugs and VR tech, a process previously trialled on chimpanzees. Later, David Cronenberg’s body-horror sci-fi romp eXistenZ—released, like The Matrix, in 1999—examined the shifting relationship between people and technology through the prism of characters playing multiple roles across different levels of a VR video game, eventually losing sight of where reality lies, or whether it even exists at all.

Meanwhile, in the real world, VR technology was advancing. Flight simulators had become entrenched in modern militaries, scientists were beginning to use VR to treat phobias and PTSD, and NASA developed a VR system to drive Mars rovers in real time. Yet once again, VR failed to take off commercially, despite the two major video game producers, Sega and Nintendo, both releasing commercial units.

This era was also the golden fifth generation of gaming, which included the introduction of the Sega Saturn, the Sony PlayStation and the Nintendo 64 consoles. The Atari 2600 and 5200 gaming systems of the late 1970s and early 80s had arrived at the perfect time to capitalise on emerging popular culture franchises, but the development of these 32- and 64-bit 3D consoles pushed gaming to a whole new level through advancements in CD-based storage and 3D graphics technology, the miniaturisation of hardware and the emergence of the networked possibilities of the internet.

In 1994 in the US, arcade games and home console game sales generated $7 billion and $6 billion respectively (compared to the $5 billion generated from cinema tickets).

Fast-forward two decades, and the growth in gaming is unprecedented; in 2016, global gaming sales hit $90 billion, almost seven times the combined $13 billion that US arcade and home console sales generated in 1994, with the increase largely driven by mobile and handheld gaming.

In one sense, the agency of players in video games, exercised through the ability to control characters on the screen, has prepared gamers for the next wave of commercialised VR.

The wave fully landed in 2016, with three major head-mounted displays released to market, priced at a relatively affordable $500 to $1000. At the lower end of the market, people can use their smartphones with headsets costing around 10 per cent of the price of the premium units. With over 2 billion smartphone users worldwide, including 15 million in Australia, accessing virtual reality is literally in almost everyone’s hands.

The core difference between the two main types of accessible VR devices lies in how much control the user can exert on the virtual environment. For smartphone units, users are largely restricted to what they can see: the realm of 360-degree video. A player can look all around their virtual self, but where the virtual self can go is dictated by the camera operator.

Using the same technology, news organizations are beginning to invest in VR reporting, recognizing the value and power of immersing a viewer inside an experience. In 2015, The New York Times launched NYT VR, which allows viewers to “experience” being embedded with Iraqi forces during a battle or going on a journey through the solar system. In 2016, Amnesty International’s 360 Syria VR experience depicted the devastation of the bombing of Aleppo; designed as a fundraising tool, it went on to win the Third Sector ‘Digital Innovation of the Year’ award.

Premium VR units, on the other hand, provide more individual control over movement. On top of the video and audio immersion, handheld controllers allow players to interact with their environments: to walk along the edges of digital buildings, fly an X-wing fighter from inside the cockpit, and create three-dimensional artworks.

Despite the hype and the variety of market entry points, initial sales figures for the new wave of VR have so far been disappointing. It is possible that the world is not ready to embrace large-scale VR just yet.

Given some of the cultural touchstones observed here, that is probably not such a bad thing. The military–industrial complex has maintained its use of VR since those early days, and has co-opted many gaming developments into both its training and propaganda. US Marines used a modified version of Doom II to train new recruits, while Xbox games Full Spectrum Warrior and America’s Army are both openly used as military recruitment and training tools, a development reminiscent of the 1984 film The Last Starfighter, in which an American teenager is drafted into an intergalactic civil war after achieving the high score on his favorite arcade game. The development of first-person shooters, like the Battlefield, Rainbow Six, and Call of Duty franchises, has been heavily influenced by a desire for battlefield authenticity, with extensive research used not just to simulate past battles, but also to prophesy the future of war. It is eerily reminiscent of Orson Scott Card’s 1985 novel Ender’s Game, in which (again, spoiler alert) a teenager thinks he is playing a video game that trains him to fight an alien species; instead, after winning the game and destroying the simulated alien homeworld, he discovers that he has unknowingly been controlling actual soldiers.

In popular culture, of course, representations of VR remain prevalent. The fifth season of Netflix’s House of Cards includes the use of VR to treat PTSD. The overarching villains of the 2017 season of the BBC’s Doctor Who use a large-scale VR simulation of the history of the Earth and humanity to plan their attack. Meanwhile, in Ernest Cline’s 2011 novel Ready Player One, soon to be turned into a Spielberg film, education via VR is a given. At the start of the story, teenager Wade Watts is living in the American slums of the 21st century, a cityscape of mobile homes stacked into towers, the product of an era of mass migration towards the cities as economic inequality spiraled out of control. The only way that Wade and children like him can get an education is via the OASIS, a VR world created by an eccentric tech billionaire who made it free (to a degree) for people to access, ensuring that all children are given the console, headset and haptic gloves (which allow them to interact with and manipulate a virtual environment—the likely next stage in VR development) that are required to access one of the virtual schools.

Like much other speculative fiction, Ready Player One is as much a reflection of our present as anything else: there is the rich philanthropist as economic hero trope, the faceless corporate behemoth as villain, and the story itself centers on the desire of Wade and his friends to become something other than who they are, with VR creating the possibility of transcending their selves and creating new identities without what they deem to be imperfections, such as their wealth, race, gender, sexuality or appearance. For techno-utopians, the promise and attraction of VR is not just the reshaping of our own identities and potentials, but also the ability to shape those of others. Producer Chris Milk calls VR the “ultimate empathy machine,” and is effusive in a way that only TED talk participants can be, claiming VR “connects humans to other humans in a profound way that I’ve never seen before in any other form of media. And it can change people’s perception of each other. And that’s how I think virtual reality has the potential to actually change the world.”

Of course, whether it is using VR for the treatment of vertigo and PTSD, or drawing our attention to unconscious biases like racism, it is possible that VR does have the ability to change our perception of the world around us, that the knowledge we gain from this technology can be transformative.

But Plato’s Cave presupposes that those freeing the prisoner from his chains to reveal the true nature of “reality” are altruistic in their intent, and that the world being shown to the freed prisoner is indeed the truth. It is an allegory that does not allow for the world as it is today, or the pervasive desire to escape it.

The continued commercial failure of VR may represent an unconscious resistance to jettisoning our connection to the real. Maybe we are waiting for that blockbuster game to drive mass-market appeal. Perhaps the technology simply is not good enough yet to simulate a truly authentic—and profitable—experience. In this sense we are trapped. We crave authenticity of experience but, despite the efforts of philosophers, authors and auteurs, our imaginations appear limited to what we can individually consume and identify with. While capitalism lumbers on, we cannot see anything but the shadows on the wall.

__________________________________

This essay originally appeared in Overland Literary Journal issue 228.

Mark Riboldi

Mark Riboldi is a writer, photographer and communications consultant living in Sydney. He can be found on Twitter at @markriboldi.