I saw the same therapist for nearly seven years while living in Brooklyn. Her office was in an old brownstone that was about a ten-minute walk from the subway. I’d speed-walk there, and sometimes, if she was running late or our session ran long, I would call a Lyft while she processed my payment, hoping to get back to work fast enough not to have to explain to my coworkers—or, god forbid, my boss—where I’d been.

Early on in our relationship, I struggled against her quiet, traditional approach. But I kept going back because I didn’t know how else to get out of my post-graduate school funk. And eventually I realized her mere stoic presence helped. It helped me feel less unmoored in my transition to working life; helped me work through worries related to getting married and changing jobs; helped me manage the anxiety that sometimes made it hard for me to navigate my life and relationships, let alone New York City.

She helped me decide (or, she would probably say, helped me realize that I had already decided) to move back home to North Carolina. When I left her brownstone for the last time, it felt like a mutual breakup, a tough-but-necessary separation with good memories and no hard feelings.

But the glow of being closer to family and away from the stresses of the city wore off after a few months. I was deep into writing my first book, about the impact of technology on empathy, and of empathy on the development of new technologies. I was writing every day about the importance of human contact, of having your feelings recognized, and of recognizing others’ feelings; but, especially without that biweekly visit to someone trained and paid to express empathy, I was feeling a dearth of it in my own life.

The prospect of finding a new therapist and starting over was daunting. I felt exhausted just thinking about “auditioning” more than one in an effort to find someone with whom I felt a connection, and the idea of rehashing all of my baggage about my “family of origin” and various traumas made me feel nauseous. I wished for a way to skip all of that and just get to the talking and figuring things out.

One day, while going through my research notes on the development of empathic technology—artificial intelligence that can learn to act like it has empathy—and the debate over whether that empathy is “real,” and whether it matters, the obvious answer struck me: maybe there was a bot for that.

*

That summer, I flew back to hot and sticky New York for a reporting trip. I was there for the 15th annual Games for Change Festival, which held a special day-long summit at the New School on the change-making potential of combined real-and-virtual experiences known as “extended reality,” or XR. A lot of these experiences claimed to be able to trigger and enhance empathy, and I wanted to see some proof.

One of the presentations that day was from behavioral neuroscientist and medical tech developer Walter Greenleaf. His slides provided a whirlwind journey through uses of XR and virtual reality in medicine, including a “walk the plank” simulator meant to help with fear of heights and a swimming-with-dolphins experience that helps distract kids from uncomfortable medical procedures. Then, he played a short video of an interaction between a young man in a checkered shirt and a digital avatar with brown hair and a brown cardigan, sitting with legs crossed on a pink armchair. He said her name was Ellie.

I watched the split-screen demo from a seat in the half-packed auditorium. Ellie spoke with a pleasant humanlike voice, more like a very calm cartoon character than an embodied Siri. She made a little bit of small talk with the man, then explained her mission: “I was created to talk to people in a safe and secure environment. I’m not a therapist, but I’m here to learn about people, and would love to learn about you.” As the small talk continued, the side of the screen with the man’s face showed how technology called MultiSense was tracking his gaze, his smile, which way he leaned, and the movements of his eyes.

Ellie asked where he was from.

“Los Angeles,” he said.

“Oh!” she responded brightly. “I’m from LA myself.”

I smirked a little, and a titter of laughter went through the crowd. The demo skipped ahead a bit, to Ellie asking the man when he had last been “really happy.” He hesitated and looked down as he struggled to answer. Words at the bottom of the screen noted that Ellie, whose technology is actually called SimSensei, was able to track the man’s gaze and respond to it.

“I noticed you were hesitant on that one,” she said, leaning forward to engage him and encourage him to say more.

I suddenly thought of my Brooklyn therapist, legs crossed in her own armchair, leaning forward, head bent slightly, silently encouraging me to go on. I was reminded of a concept I’d come across in my research on humanlike technology: the uncanny valley, coined by Tokyo Institute of Technology professor Masahiro Mori to refer to the way humans find robots with humanlike features appealing up to a point, then begin to recoil when they seem “too real.”

As she mentioned, Ellie was not meant to be a therapist. “She was an interviewer,” her creator, Albert “Skip” Rizzo, of the University of Southern California’s Institute for Creative Technologies, told me in a phone conversation later. He and his colleagues created her as part of a larger effort to quantify people’s behavior—specifically the behavior of members of the military, veterans, and their families—while they answered questions. Ellie used facial tracking to note markers of distress and symptom improvement, quantifying behaviors in a way that might complement a more traditional anxiety, depression, or PTSD screening tool.

And while she was imperfect, and even a little disconcerting, she seemed to work.

Research conducted by Rizzo and colleagues found that those who spoke to Ellie were more comfortable sharing their symptoms than they had been when filling out a paper assessment. Talking to Ellie seemed to put a distance between expressing emotions, experiences, and symptoms, and having to admit them to an authority. Participants seemed to appreciate the opportunity to open up without the potential consequences of doing so to a real person.

One of the researchers said at the time: “By receiving anonymous feedback from a virtual human interviewer that they are at risk for PTSD, they could be encouraged to seek help without having symptoms flagged on their military record.”

This felt to me like technology with empathy, even if the empathy wasn’t necessarily emanating from Ellie herself. It also felt like something I’d like to try.

*

When I got home from my reporting trip, I decided to try an app that connects users to licensed therapists via text, phone, or video. The phone and video options felt oddly too vulnerable for me; if I was going to try out a new therapist, I wanted to be able to get a sense of their entire presence, or none of it at all. Or so I thought.

I couldn’t connect with the woman the app assigned to me. Her language felt infantilizing, and she seemed to ignore the concerns I mentioned in favor of getting more of my backstory, but without explaining her methods. It didn’t last, but it pushed me to get back out into the real therapy world.

I’ve now found a flesh-and-blood therapist in North Carolina with whom I have a good rapport. She’s more expressive and collaborative than my Brooklyn therapist, and far more empathetic than the one I met through the app. I don’t believe any technology will be able to replace the therapeutic power of attentive silence or easeful body language, nor do I think it should. But as a bridge to getting real-life help, virtual assessment and counseling have far-reaching positive potential.

__________________________________

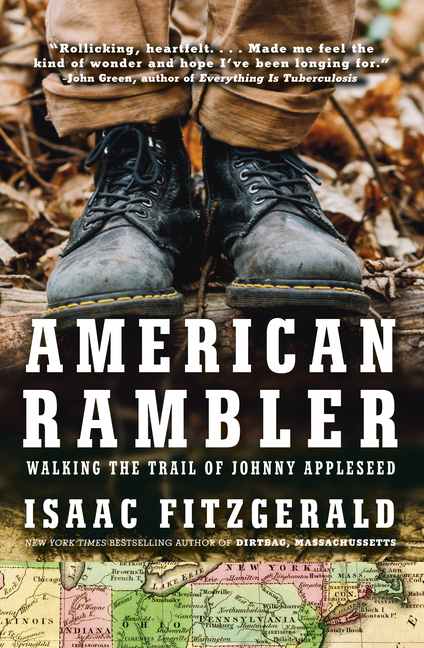

The Future of Feeling, by Kaitlin Ugolik Phillips, is available from Little A.

Kaitlin Ugolik Phillips

Kaitlin Ugolik Phillips is a journalist and editor who lives in Raleigh, North Carolina. Her writing on law, finance, health, and technology has appeared in the Establishment, VICE, Quartz, Institutional Investor magazine, Law360, Columbia Journalism Review, and Narratively, among others. She writes a blog and newsletter about empathy featuring reportage, essays, and interviews. For more information, visit www.kaitlinugolik.com.