What Discourse Regulation by Social Media Giants Means for Democratic Societies

Jamie Susskind on Free Speech and Disinformation in the Digital Age

The people of Britain are not known for excessive displays of emotion. When something dramatic happens, the British way is to take a sip of tea, think of the Queen and never speak of the matter again. Every once in a while, however, the country is bestirred from what Her Majesty has called its “quiet, good-humored resolve” into a frenzy of terrible rage.

One such incident took place in 2020.

Dominic Cummings was a principal aide to the prime minister and one of the architects of the UK’s strict Covid-19 lockdown, which forbade people even from attending the funerals of their loved ones. It was discovered that, at the height of the lockdown, Mr. Cummings took a 500-mile road trip in breach of the rules he had helped to write. Along the way, he visited a pretty tourist town, coincidentally on his wife’s birthday, which he maintained (some thought rather unconvincingly) was a medical expedition to “test” his “eyesight.”

Enraged by the hypocrisy, the British public took to social media and demanded his resignation. On Twitter the scandal soon had its own hashtag: #CummingsGate. But seasoned observers noticed something strange. Despite dominating the national conversation, the word “Cummings” itself did not appear on Twitter’s trending topics. It was as if the issue was being hidden from public sight.

Why?

The answer had nothing to do with high politics. “CummingsGate” was censored because the word “cumming” was on Twitter’s roster of forbidden pornographic terms. A similar mistake occurred when Facebook challenged posts referring to “the Hoe”—not a term of misogynist abuse, but a reference to Plymouth Hoe, a scenic spot on the southwest coast of England. This type of error is known in computer science as the Scunthorpe Problem, named after the English town whose name conceals a very naughty word. See also—for educational purposes only—the quaint English settlements of Penistone, Cockermouth and Rimswell.


The Cummings affair demonstrated, in microcosm, all the comedy, tragedy and farce of online political discourse: the hum of intrigue, the sugar rush of viral outrage, the mountainous difficulty of moderating at scale, the whiff of conspiracy. It reminded us of what we already know: that technology and democracy are now so interconnected that it is hard to tell where one ends and the other begins. Those who design and control social media platforms set the rules of debate: what may be said, who may say it and how.

*

There are many online spaces for citizens to engage in public discussion. On Facebook platforms alone, people post more than 100 billion times a day, roughly 13 posts for every person on the planet. Platforms often claim that they are not in the business of creating the content that appears on their sites, and that’s usually true. But that doesn’t mean they are neutral in public debate. Far from it. Instead of making arguments themselves, they rank, sort and order the ideas of others. They censor. They block. They boost. They silence. They decide who is seen and who remains hidden.

The simplest form of platform power is the ability to say no. With a click, any user, even a president, can be banned from a platform forever. Nearly 90 percent of social media platforms’ terms of service allow them to remove personal accounts without notice or appeal. Often the banished deserve their fate: Facebook removes billions of fake accounts every year, as well as countless fraudsters, predators and crooks. Donald Trump almost certainly deserved it when it happened to him. However, there but for the grace of Mark Zuckerberg go all of us. You or I could find ourselves shut out from a prominent forum for reasons we don’t fully understand. No law requires platforms to explain or justify their decisions. Even if you accept that they do not deliberately favor certain political views over others, the sheer scale at which they operate means they sometimes make mistakes.

Sometimes platforms’ decisions to let users stay can be just as controversial as their decisions to throw them out. Facebook, for example, has been accused of letting powerful politicians in India breach its rules on fake news.

Platforms block content as well as people. Turkey requires Twitter to remove tweets that are critical of the country’s president. Facebook removes “blasphemous” content on behalf of the government of Pakistan and anti-royal content on behalf of the government of Thailand. TikTok censors content mentioning Tibetan independence and Tiananmen Square. The EU prods platforms to take down hate speech. Often, what seems like pure corporate power is a mix of commercial and government forces, interacting in ways that are opaque to ordinary users.


Sometimes platforms remove material because it is unlawful. But often they go further. In Turkey, TikTok banned depictions of alcohol consumption even though drinking alcohol is legal there. It also prohibited depictions of homosexuality to a far greater extent than required by local law. Ravelry, a platform used by nearly 10 million knitting and crocheting enthusiasts, forbids (lawful) images that are supportive of Donald Trump. A photograph of knitwear emblazoned with “Keep America Great” will be removed by the site’s moderators. But garments protesting Mr. Trump’s “grab ’em by the pussy” remark (the so-called “pussy hats”) are actively encouraged.

When social media platforms permit or remove certain forms of legal expression, they make contestable judgments about the nature of free expression. Instagram’s community guidelines, for instance, contain a general prohibition on the display of female nipples. Rihanna, Miley Cyrus and Chrissy Teigen have all had photographs removed for breaching this rule. But if their snaps had been of breastfeeding or post-mastectomy scarring, then Instagram would have allowed them. And if the offending areas had been pasted over with images of male nipples, that would also have been acceptable. You may agree or disagree with Instagram’s approach to this issue, but either way, the platform’s 500 million users have little choice but to abide by its judgment about what flesh should be seen and what should remain hidden.

The same goes for political speech more generally. During the Covid-19 crisis, Twitter removed tweets from the Presidents of Brazil and Venezuela that endorsed quack remedies for the virus. Twitter suspended Donald Trump from its platform permanently. Some supported these moves while others were uncomfortable about a private company censoring heads of state. It has been reasonably pointed out that other controversial political figures, like Iran’s Ayatollah Khamenei—who believes that gender equality is a Zionist plot to corrupt women—are still merrily tweeting away.

*

Political advertising is one of the most important and sensitive forms of speech. In the US, platforms essentially decide for themselves what should be allowed. Twitter and TikTok have banned political advertisements altogether. Snapchat permits them but only if they are fact-checked. Google allows them without any fact-checking at all. Facebook allows political advertisements and will fact-check them too, unless they are made by politicians, in which case they are left alone. In 2019, Donald Trump launched a Facebook advertisement that falsely claimed that Joe Biden had offered Ukrainian officials a bribe. The clip was edited to give the misleading impression that Mr. Biden had confessed to this nonexistent crime. It was seen by more than four million people.

When Mark Zuckerberg launched Facebook in his sweaty college dorm, he probably never imagined that he might eventually have to regulate the falsehoods of the President of the United States. But we might reasonably ask whether any private company should be making that kind of decision, on a regular basis, all by itself. Political advertisements on television are subject to robust regulation. Why should online advertisements be treated differently?

“Which kinds of speech should be permitted and which should be prohibited?” is a big philosophical question. As we will see, democratic societies offer different answers. The strange reality of the current era, however, is that philosophy and tradition matter less than the opinions of the lawyers and business executives who make the decisions.

Frustratingly, social media platforms often seem to be making up their ramshackle philosophies as they go along. Jack Dorsey, a co-founder of Twitter, says that his platform was founded “without a plan” and that it “built incentives into the app that encouraged users and media outlets to write tweets…that appealed to sensationalism instead of accuracy.” In 2021, the Wall Street Journal revealed that Facebook had “whitelisted” certain celebrities and politicians, allowing them to violate the platform’s rules without facing sanctions. The Brazilian footballer Neymar, for instance, was apparently permitted to post nude pictures of a woman who had accused him of rape.

In 1922, the authoritarian jurist Carl Schmitt wrote, “Sovereign is he who decides on the exception.” The platforms decide the rules and they decide the exceptions, often in the heat of a scandal. But knee-jerk crisis management is no way to regulate the deliberation of a free people.

__________________________________

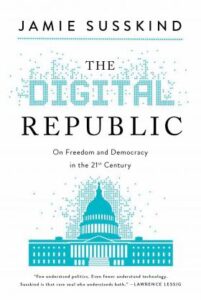

Excerpted from The Digital Republic: On Freedom and Democracy in the 21st Century by Jamie Susskind. Copyright © 2022. Available from Pegasus Books.

Jamie Susskind

Jamie Susskind is a barrister and the author of the award-winning bestseller Future Politics: Living Together in a World Transformed by Tech (Oxford University Press, 2018), which received the Estoril Global Issues Distinguished Book Prize 2019, and was an Evening Standard and Prospect Book of the Year. He has fellowships at Harvard and Cambridge and currently lives in London.