How Section 230 Shields Platforms from Accountability for What Their Users Post

Eric Berkowitz on the Evolution of Free Speech on the Internet

Nowhere is freedom of expression as safeguarded as in the US, and nowhere has online speech been more freewheeling. The First Amendment was already a high barrier against most government incursions, but in 1996, Congress built another rampart with Section 230 of the Communications Decency Act, a statute giving Internet companies and social media platforms additional and sometimes overlapping protections against liability. The law immunizes website operators from lawsuits for ads and most user-generated content, from political diatribes and videos of police brutality to vicious reviews of restaurants and plumbers.

Thanks to Section 230, platforms can moderate their sites without being held to account for their users' and advertisers' false, hateful, or defamatory posts, and they can amplify or take down posts, or even terminate user accounts, without legal exposure.

Section 230 is one of history’s most significant enablers of speech, and like speech itself it is a mixed bag. Under its protection has emerged a social media environment reflective of the best and worst of its billions of inhabitants, and brimming with all the hatred, humor, creativity, and lies they can muster. The resulting “verbal cacophony,” raised to an earsplitting volume by the platforms’ division-driving algorithms, supercharges partisan political voices and infuriates the targets of online attacks.

Democrats say the law helps to spread hate speech and right-wing disinformation and want more content taken down; Republicans argue it allows liberal-leaning platforms to "censor" conservative viewpoints and want less content removed. Proposals are bubbling up to eliminate or modify the law's liability shield, but a divided Congress and the president must still agree on how to do so, and such agreement is uncertain in the short term. For the present, American Internet censorship will continue to result less from legal constraints than from the individual platforms' patchwork of evolving, conflicting, and inconsistently enforced content moderation policies.

Section 230 was spurred in part by a crooked financial firm, Stratton Oakmont (made famous by its founder's memoir, The Wolf of Wall Street, and Martin Scorsese's eponymous film adaptation). In 1995, Stratton sued a now-forgotten Internet service provider (ISP), Prodigy, for libel over anonymous messages, posted on one of its message boards, that accused Stratton of bad behavior. In ruling against Prodigy, a New York court held that because the ISP moderated its users' postings and had deleted other posts it found inappropriate, it was acting as a "publisher" of the accusations, and as such had legal exposure for libels against Stratton.

Congress, intent on fostering a "vibrant and competitive free market" for the Internet "unfettered by . . . regulation," and worried that rulings such as the one against Prodigy would create a disincentive for the nascent industry to remove harmful content, adopted Section 230. Under cover of this statute, sites have been able to manage online operations without having to evaluate every one of the countless posts that appear each day, while at the same time amplifying and profiting from those posts, even the hateful and obnoxious ones, with strategic ad placements.

The courts have applied Section 230 widely, rejecting challenges both when sites remove user content and when they leave it up. In 2019, for example, a federal appellate court in New York held that the law bars even civil terrorism claims. Some Israeli victims of attacks by Hamas (designated a terrorist organization in the US) alleged that Facebook had enabled the aggression by providing Hamas with a platform to promote terrorism around the world. The court came down squarely for Facebook. In its view, providing "communications services" to Hamas, as Facebook had done, "falls within the heartland" of Section 230's protections. "So, too," the court held, "does Facebook's alleged failure to delete content from Hamas members' Facebook pages."

Put another way, Facebook was free to take down such content, but it bore no responsibility for choosing not to. Another court invoked the law in 2020 to dismiss Congressman Devin Nunes's $250 million lawsuit against Twitter, which was based in part on parody accounts ridiculing him, including one purportedly run by a "cow." Nunes accused Twitter of keeping the accounts up to harm conservatives such as himself, but the court said the platform's alleged political bias was irrelevant. Section 230 immunizes Twitter from exposure for what its users post, even insults coming from an account purportedly managed by a farm animal.

Section 230’s chief beneficiary to date has been Donald Trump, who built his political career on Twitter’s and Facebook’s willingness to carry his false and defamatory messages—something they never would have done without the immunity granted by the law. Yet, days after Twitter added fact-checking labels to some of Trump’s May 2020 messages attacking mail-in voting, Trump turned on Twitter, and social media generally, with an executive order taking direct aim at Section 230.

The order is largely a stream-of-consciousness mish-mash of complaints about anti-conservative online bias, and its proposals were widely ridiculed by legal scholars as untenable. But like other Trump attacks on the media, legal effectiveness wasn’t the real goal. The order was clearly meant to intimidate social media companies into refraining from interfering with his election-year communications strategy. (Twitter and Facebook continued labeling Trump’s false pre-election posts about voting, although they did so slowly and sporadically.)

The executive order also spurred a raft of legislative and other proposals to modify or reduce Section 230's protections. Which of them, if any, will become law is impossible to say, and the more aggressive ones are unlikely to survive First Amendment challenges. But whether Section 230 is "doomed," as law professor Eric Goldman said in June 2020, or will merely be tweaked, it appears that what some call the Wild Wild Web may already be somewhat tamed, even without legislative action.

Within two days in late June 2020, the usually undisciplined Reddit banned thousands of forums for hate speech, Amazon-owned Twitch suspended Trump’s official account for hateful conduct, and YouTube banned a number of far-right political figures.

Many of the attacks on the social media platforms' Section 230 immunity appear to be displaced complaints about the weakness of US political institutions generally. Never before has a president been such a source of division and misinformation. As there was no way to effectively call him to account for such speech, the impulse is to attack the channels through which Trump and similar divisive figures communicate. In a well-functioning political system, we would not get to the point where the platforms were forced to act as guardrails against unmoored government actors.

The platforms share responsibility for the profusion of online misinformation and hatred, but it is a mistake to cast them as democracy’s last line of defense. As the tech writer Casey Newton observed, “Trump is a problem the platforms can’t solve.”

The Constitution raises its own formidable shield against content interference on the Internet. The First Amendment bars the government from censoring most speech of private citizens, but it also allows private companies to enforce their own speech restrictions. Like private schools, malls, or newspapers, Internet platforms are free to set their own rules on what may be said, amplified, or deleted. The platforms’ efforts to represent themselves as free speech champions make for good public relations, but they are not such champions and have no obligation to be.

Hundreds of millions of times each year, their employees and algorithms muscle speech around, promoting preferred voices and burying others. Individual users who post are thus protected against censorship by the government, but not by the platforms themselves. By the same token, the platforms are constitutionally immune from government meddling if what they are doing can be classified as speech, and what constitutes speech in this context can be surprisingly broad.

In 2014, a federal district court characterized the burying of search results by a search engine as protected political speech under the First Amendment. The case was brought by a group of pro-democracy advocates against Baidu.com, the giant Chinese search engine, for blocking pro-democracy messages, videos, and similar content from its search results.

The court concluded that Baidu's algorithm, which was evidently set to remove such materials on instructions from the Chinese government, constituted an editorial judgment about which political ideas to promote, which the Constitution protects. Another federal district court held that Google's ranking of search results is First Amendment speech, so neither the government nor the courts can question how Google or other search engines rank their results: "PageRanks are opinions—opinions of the significance of particular web sites as they correspond to a search query."

The First Amendment also bars government officials from censoring private citizens on the officials' own social media accounts. As discussed earlier, Donald Trump learned this when the courts stopped him from blocking people on his Twitter account. They were unmoved by Trump's assertion that he held the account in his personal capacity. Given how the account was used, it was a designated public forum, so Trump could not suppress unwelcome viewpoints on it. Trump is not alone in using social media accounts for political purposes and then blocking critical views. His fellow Republican, Governor Larry Hogan of Maryland, was forced to stop blocking and deleting criticisms on his social media accounts, and liberal congresswoman Alexandria Ocasio-Cortez has been sued for doing the same thing.

The rulings just discussed did not come from the Supreme Court, and First Amendment law is nothing if not dynamic. Until Congress and the Supreme Court weigh in on the many ongoing issues raised by censorship on the Internet, there will be many open questions. But for now, the dual protections of the First Amendment and Section 230 of the Communications Decency Act have fostered the world's most vibrant, if often chaotic and misinformation-saturated, online speech environment. Nothing on the Internet exists in isolation, however, and the US is not immune to the much more intrusive approaches of other jurisdictions.

______________________________________

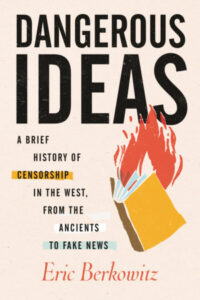

Excerpted from Dangerous Ideas: A Brief History of Censorship in the West, from the Ancients to Fake News by Eric Berkowitz (Beacon Press, 2021). Reprinted with permission from Beacon Press.

Eric Berkowitz

Eric Berkowitz is a writer, lawyer, and journalist. For more than 20 years, he practiced intellectual property and business litigation law in Los Angeles. Berkowitz has published widely throughout his career, and his writing has appeared in periodicals such as the New York Times, the Washington Post, The Economist, the Los Angeles Times, and LA Weekly. His previous books include Sex and Punishment and The Boundaries of Desire. Dangerous Ideas is his latest book. He lives in San Francisco.