Grammarly pulled its weird AI feature impersonating writers without permission.

Grammarly has quickly rolled back a controversial software feature after intense backlash. The short-lived feature was called “Expert Review,” and offered AI-generated writing advice delivered via sock puppet versions of living and dead writers, all created without their permission. The anger inspired by these “Experts” forced the company to back down this week.

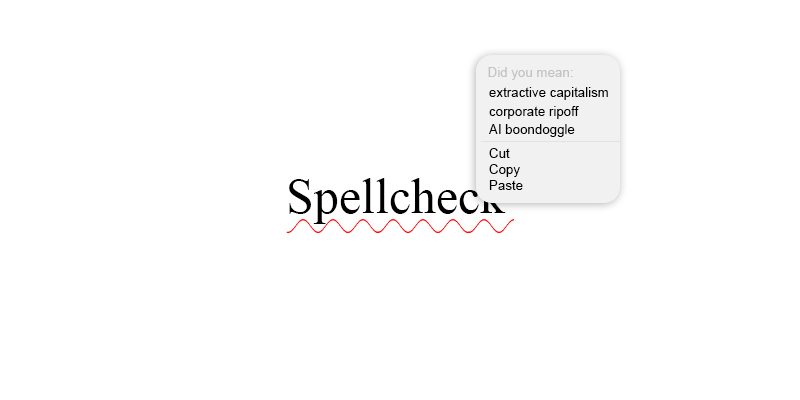

Grammarly used to be a sort of omnipresent spellcheck that followed you around your web browser, but it has recently become much more invested in adding tons of AI products to mediate writing. As Miles Klee wrote in Wired, new features include a “chatbot that will answer specific questions as you compose a draft, a ‘paraphraser’ feature that suggests changes in style, a ‘humanizer’ that revises according to a selected voice, an AI grader that predicts how your document would score as college coursework, and even tools for flagging and tweaking phrases commonly produced by large language models.”

It amounts to a completely overwhelming fleet of intrusive robots, like if a bunch of Microsoft Clippys got Keanu Reeves powers to manipulate the Matrix, and then used them to enter your Google Docs and delete all your adverbs.

“Expert Reviews” were the most specific of these tools, framed as bespoke writing coaches. Jen Dakin, who works in communications at Grammarly’s parent company Superhuman, described the “Experts” as a process where their software scans your writing “and leverages our underlying LLM to surface expert content that can help the document’s author shape their work.” This software conveniently doesn’t “claim endorsement or direct participation from those experts,” she said. Rather, “it provides suggestions inspired by works of experts and points users toward influential voices whose scholarship they can then explore more deeply.” I don’t buy that Grammarly is trying to get writers to click away from its platform to connect with other writing, but okay.

Grammarly never got permission from the people it chose to hang from its marionette strings. Its expert avatars included living and dead writers like Stephen King, Neil deGrasse Tyson, and Carl Sagan, but its scraping was incredibly broad and hoovered up more than just the big names. The Verge reported that a number of editors from its own masthead appeared without permission, and more depressingly, Vanessa Heggie, a professor at the University of Birmingham, posted on LinkedIn about how fellow academic David Abulafia was included too. What Heggie found “obscene” was that Abulafia died in January, which didn’t stop Grammarly from using his work, name, and reputation in “creating little LLMs.”

AI companies increasingly work on a model of non-consent. Their assumption is that everything in existence can be shredded and fed into their software, which puts the onus on the rest of us to act as unpaid regulators.

And there’s something offensively tawdry about all of this being in mere service of extracting rent. There’s nothing being created here. I was a kid when the coolest a person could be was wearing American Apparel on stage next to Girl Talk’s laptop, so I get the appeal of remixing. But a private company that’s attracting $100+ million VC checks and using them to plunder the work and likenesses of public writers and academics is not a cool postmodern pastiche. It’s a rip-off.

James Folta

James Folta is a writer and the managing editor of Points in Case. He co-writes the weekly Newsletter of Humorous Writing. More at www.jamesfolta.com or at jfolta[at]lithub[dot]com.