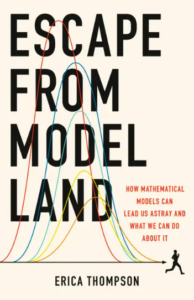

Why Is a Raven Like a Writing-Desk? On the Uses and Limitations of Mathematical Models

Erica Thompson Considers the Metaphors of the Numerical World

“Have you guessed the riddle yet?” the Hatter said, turning to Alice again.

“No, I give it up,” Alice replied: “What’s the answer?”

“I haven’t the slightest idea,” said the Hatter.

–Lewis Carroll, Alice’s Adventures in Wonderland (1865)


Lewis Carroll had no particular answer in mind to the Mad Hatter’s riddle, “Why is a raven like a writing-desk?”, when he wrote Alice’s Adventures in Wonderland, but it has vexed readers for years. Many have come up with their own answers, such as “One is good for writing books and the other for biting rooks.”

Presented with any two objects or concepts, chosen more or less at random, the human mind is remarkably good at finding similarities between them, despite all the other ways in which they might differ. Internet “memes,” for example, are small shared metaphors: a pre-existing structure (the picture, which supplies a framing story) is loaded with new meaning by overlaid text that identifies something else as the subject of the metaphor.

To take an example that has been around for a long time: the image of Boromir (Sean Bean) from Peter Jackson’s 2001 film The Fellowship of the Ring saying, “One does not simply walk into Mordor.” This meme has been repurposed many times to imply that someone is failing to take a difficult task seriously. Other memes include “Distracted Boyfriend” (one thing superseded by another), “Picard Facepalm” (you did something predictably silly), “Running Away Balloon” (being held back from achieving an objective) and “This Is Fine” (denial of a bad situation). This capacity for metaphor, and elaboration of the metaphor to generate insight or amusement, is what underlies our propensity for model-building. When you create a metaphor, or model, or meme, you are reframing a situation from a new perspective, emphasizing one aspect of it and playing down others.

Why is a computational model akin to the Earth’s climate? What does a Jane Austen novel have to tell us about human relationships today? In what respects is an Ordnance Survey map like the topography of the Lake District? In what way does a Picasso painting resemble its subject? How is a dynamic-stochastic general equilibrium model like the economy?

These are all models, all useful and at the same time all fallible and all limited. If we rely on Jane Austen alone to inform our dating habits in the twenty-first century, we may be as surprised by the outcome as if we used an Ordnance Survey map to attempt to paint a picture of Scafell Pike or a dynamic-stochastic general equilibrium model to predict a financial crisis. In some ways these models can be useful; in other ways they may be completely uninformative; in yet other ways they could be dangerously misleading. What does it mean, then, to make a model? Why is a raven like a writing-desk?

Creating new metaphors

In one view, the act of modeling is just an assertion that A (a thing we are interested in) is like B (something else of which we already have some kind of understanding). This is exactly the process of creating a metaphor: “you are a gem”; “he is a thorn in my side”; “all the world’s a stage,” etc. The choice of B is essentially unconstrained—we could even imagine a random-metaphor generator which chooses completely randomly from a list of objects or concepts. Then, having generated some sentence like “The British economy in 2030 will be a filament lightbulb” or “My present housing situation is an organic avocado,” we could start to think about the ways in which that might be insightful, an aid to thinking, amusing or totally useless.

Even though B might be random, A is not: there is little interest in the metaphor sparked by a generic random sentence such as “A filament lightbulb is an organic avocado.” We choose A precisely because we want to understand its qualities better, not by measuring or observing it more closely, but by rethinking its nature: reframing it in our own minds and reimagining its relationships with other entities.

This is closely linked to artistic metaphor. Why does a portrait represent a certain person or a painting a particular landscape? If for some viewers there is immediate visual recognition, for others it may be only a title or caption that assigns this meaning. Furthermore, some meanings may be assigned by the beholder that were not intended by the artist. The process of rethinking and reimagining is conceived by the artist (modeler), stimulated by the art (the model) and ultimately interpreted by an audience (which may include the artist or modeler).

In brief, I think that the question of what models are, how they should be interpreted and what they can do for us actually has a lot to do with the question of what art is, how it can be interpreted and what it can do for us.

Of course, just as some artworks are directly and recognizably representative of their subject, so are some models. Where we have a sufficient quantity of data, we can compare the outputs of the model directly with observation and thus state whether our model is lifelike or not. But this is still limited: even though we may be able to say that a photo is a very good two-dimensional representation of my father’s face, it does not look a great deal like him from the back, nor does it have any opinions about my lifestyle choices or any preferences about what to have for dinner.

Even restricted to the two-dimensional appearance, are we most interested in the outline and contours of the face, or in the accurate representation of color, or in the portrayal of the spirit or personality of the individual? That probably depends on whether it is a photograph for a family album, a passport photo or a submission for a photography competition.


So the question “how good is B as a model for A” has lots of answers. In ideal circumstances, we really can narrow A down to one numerical scale with a well-defined, observable, correct answer, such as the daily depth of water in my rain gauge to the nearest millimeter. Then my model based on tomorrow’s weather forecast will sometimes get the right answer and sometimes the wrong answer. It’s clear that a right answer is a right answer, but how should I deal with the wrong answers?

• Should one wrong answer invalidate the model despite 364 correct days, since the model has been shown to be “false”?

• Or, if it is right 364 days out of 365, should we say that it is “nearly perfect”?

• Does it matter if the day that was wrong was a normal day or if it contained a freak hurricane of a kind that had never before struck the area where I live?

• Does it matter whether, on the day it was wrong, it was out by only 1mm, or it forecast zero rain when 30mm fell?

As with the example of the photograph, the question of how good the model is will have different answers for the same model depending on what I want to do with it. If I am a gardener, then I am probably most interested in the overall amount of rain over a period of time, but the exact quantity on any given day is unimportant. If I am attempting to set a world record for outdoor origami, then the difference between 0mm and 1mm is critical, but I don’t care about the difference between 3mm and 20mm. If I am interested in planning and implementing flood defense strategies for the region, I may be uninterested in almost all forecasts except those where extremely high rainfall was predicted (regardless of whether it was observed) and those where extremely high rainfall was observed (regardless of whether it was predicted).

There are many different approaches to understanding and quantifying the performance of a model, and we will return to these later. For now, it’s enough to note that the evaluation of a model’s performance is not a property solely of the model and the data—it is always dependent on the purpose to which we wish to put it.
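To make the rain-gauge example concrete, here is a small Python sketch that scores two hypothetical forecast models against the same gauge readings in four ways: the literal exact-match rate, and one score each for the gardener, the outdoor origamist and the flood planner. The ten days of data, the 1mm “wet” threshold and the 30mm “extreme” threshold are invented for illustration; they are assumptions, not figures from the text.

```python
"""Toy illustration: the same rainfall forecasts scored for different purposes.

All numbers are invented for illustration. The thresholds (1 mm for "wet",
30 mm for "extreme") are assumptions, not values taken from the text.
"""

WET_MM = 1       # assumed: a day counts as wet if at least 1 mm fell
EXTREME_MM = 30  # assumed: threshold for a flood-relevant day

# Hypothetical ten days of gauge readings (mm, to the nearest millimeter).
observed = [0, 0, 3, 20, 0, 1, 0, 5, 40, 0]
# Model A: exactly right on nine days, but forecasts 0 mm on the 40 mm day.
model_a = [0, 0, 3, 20, 0, 1, 0, 5, 0, 0]
# Model B: never exactly right, but always close (and always forecasts some rain).
model_b = [1, 1, 4, 18, 1, 2, 1, 6, 38, 1]


def exact_match_rate(forecast, obs):
    """Literal view: fraction of days where the forecast equals the observation."""
    return sum(f == o for f, o in zip(forecast, obs)) / len(obs)


def gardener_error(forecast, obs):
    """Gardener: only the total over the period matters (absolute error in mm)."""
    return abs(sum(forecast) - sum(obs))


def origami_accuracy(forecast, obs):
    """Outdoor origami: only the wet-versus-dry call on each day matters."""
    return sum((f >= WET_MM) == (o >= WET_MM) for f, o in zip(forecast, obs)) / len(obs)


def flood_hit_rate(forecast, obs):
    """Flood planner: keep only days where extreme rain was forecast or observed,
    and return the fraction of those days on which it was both."""
    relevant = [(f, o) for f, o in zip(forecast, obs) if f >= EXTREME_MM or o >= EXTREME_MM]
    if not relevant:
        return None  # no extreme days, so this purpose gives no verdict
    hits = sum(f >= EXTREME_MM and o >= EXTREME_MM for f, o in relevant)
    return hits / len(relevant)


for name, forecast in [("A", model_a), ("B", model_b)]:
    print(
        f"model {name}: exact={exact_match_rate(forecast, observed):.1f} "
        f"gardener_err={gardener_error(forecast, observed)}mm "
        f"origami={origami_accuracy(forecast, observed):.1f} "
        f"flood={flood_hit_rate(forecast, observed)}"
    )
```

With these made-up numbers, model A is exactly right on nine days out of ten and makes the right wet/dry call just as often, yet it misses the one day that matters to the flood planner and underestimates the period total by 40mm. Model B is never exactly right, but it wins for the gardener and for the flood planner. Which model is “better” depends entirely on the question being asked of it.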

Taking a model literally is not taking a model seriously

To treat the works of Jane Austen as if they reflected the literal truth of goings-on in English society in the eighteenth century would be not to take her seriously. If the novels were simple statements about who did what in a particular situation, they would not have the universality or broader “truth” that readers find in her works and which make them worthy of returning to as social commentary still relevant today.

Models can be both right, in the sense of expressing a way of thinking about a situation that can generate insight, and at the same time wrong—factually incorrect. Atoms do not consist of little balls orbiting a nucleus, and yet it can be helpful to imagine that they do. Viruses do not jump randomly between people at a party, but it may be useful to think of them doing just that. The wave and particle duality of light even provides an example where we can perfectly seriously hold two contradictory models in our head at once, each of which expresses some useful and predictive characteristics of “the photon.”

Nobel Prize-winning economist Peter Diamond said in his Nobel lecture that “to me, taking a model literally is not taking a model seriously.” There are different ways to avoid taking models literally. We do not take either wave or particle theories of light literally, but we do take them both seriously. In economics, some use is made of what are called stylized facts: general principles that are known not to be true in detail but that describe some underlying observed or expected regularity. Examples of stylized facts are “per-capita economic output (generally) grows over time,” or “people who go to university (generally) earn more,” or “in the UK it is (generally) warmer in May than in November.” These stylized facts do not purport to be explanations or to suggest causation, only correlation.

Stylized facts are perhaps most like cartoons or caricatures, where some recognizable feature is overemphasized beyond the lifelike in order to capture some unique aspect that differentiates one particular individual from most others. Political cartoonists pick out prominent features such as ears (Barack Obama) or hair (Donald Trump) to construct a grotesque but immediately recognizable caricature of the subject. These features can even become detached from the individual to take on a symbolic life of their own or to animate other objects with the same persona.


We might think of models as caricatures in the same sense. Inevitably, they emphasize certain kinds of feature (perhaps those that are most superficially recognizable) and ignore others entirely. A one-dimensional energy balance model of the atmosphere is a kind of caricature sketch of the complexity of the real atmosphere. Yet despite its evident oversimplification, it can generate certain useful insights and form a good starting point for discussing what additional complexity can offer. A caricature of something or someone can, however, be drawn in different ways, as the most-loved and most-hated political figures can certainly attest. It is effectively a form of stereotype, and it appeals to the same psychological need for simplicity, while introducing subjective elements that draw on deep perspectives shared by the author and their intended audience.

Thinking of models as caricatures helps us to understand how they both generate insights and help to illustrate, communicate and share them. Thinking of models as stereotypes hints at the more negative aspects of this dynamic: in constructing this stereotype, what implicit value judgements are being made? Is my one-dimensional energy balance model of the atmosphere really “just” a model of the atmosphere, or is it (in this context) also asserting and reinforcing the primacy of the mathematical and physical sciences as the only meaningful point of reference for climate change?

In the past I have myself presented it as “the simplest possible model of climate change,” but I am increasingly concerned that such framings can be deeply counterproductive. What other “simplest possible models” might I imagine, if I had a mind that was less encumbered by the mathematical language and hierarchical nature of the physical sciences? Who might recognize and run with the insights that those models could generate?

Nigerian writer Chimamanda Ngozi Adichie points out “the danger of the single story”: if you have only one story, then you are at risk of your thinking becoming trapped in a stereotype. A single model is a single story about the world, although it might be written in equations instead of in flowery prose. Adichie says: “It is impossible to talk about the single story without talking about power… How they are told, who tells them, when they’re told, how many stories are told, are really dependent on power.”

Since each model represents only one perspective, there are infinitely many different models for any given situation. In the very simplest of mathematically ideal cases of the kind you might find on a high-school mathematics exam, we could all agree that force equals mass times acceleration is the “correct model” to use to answer the question of when the truck will reach 60mph, but in the real world there are always secondary considerations about wind speed, the shape of the truck, the legal speed restrictions, the state of mind of the driver, the age of the tires and so on. You might be thinking that clearly there is one best model to use here, and that the other considerations about wind speed and so on are only secondary; in short, that any reasonable person would agree with you about the first-order model.

In doing so, you would be defining “reasonable people” as those who agree with you and taking a rather Platonic view that the mathematically ideal situation is closer to the “truth” than the messy reality (in which, by the way, a speed limiter kicked in at 55mph). This is close to an idea that historians of science Lorraine Daston and Peter Galison have outlined, that objectivity, as a social expectation of scientific practice, essentially just means conforming to a certain current set of community standards. When the models fail, you will say that it was a special situation that couldn’t have been predicted, but force equals mass times acceleration itself is only correct in a very special set of circumstances. Nancy Cartwright, an American philosopher of science, says that the laws of physics, when they apply, apply only ceteris paribus—with all other things remaining equal. In Model Land, we can make sure that happens. In the real world, though, the ceteris very rarely stay paribus.
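The truck example can be sketched in a few lines of Python. In Model Land, a constant net force gives a constant acceleration a = F/m, so the time to reach a target speed from rest is t = v/a; a speed limiter set below the target (here the 55mph limiter mentioned above) makes that answer meaningless. The 20-tonne mass and 10 kN net force are hypothetical values chosen only for illustration, and the limiter is just one stand-in for all the other things that rarely stay equal.

```python
"""Sketch of the truck example: F = ma in Model Land versus one 'other thing'.

The mass and force are hypothetical illustration values; the 55 mph limiter
is the one detail taken from the text.
"""

MPH_TO_MS = 0.44704  # 1 mph is exactly 0.44704 m/s


def time_to_reach(target_mph, mass_kg, force_n, limiter_mph=None):
    """Seconds to reach target_mph from rest under a constant net force.

    Model Land assumes a = F/m holds all the way up to the target. If a speed
    limiter caps the speed below the target, the target is never reached and
    the function returns None instead of a time.
    """
    if limiter_mph is not None and limiter_mph < target_mph:
        return None
    accel = force_n / mass_kg  # Newton's second law, F = ma, solved for a
    return target_mph * MPH_TO_MS / accel


ideal = time_to_reach(60, mass_kg=20_000, force_n=10_000)
real = time_to_reach(60, mass_kg=20_000, force_n=10_000, limiter_mph=55)
print(f"ideal: {ideal:.1f} s, with limiter: {real}")
```

With these assumed numbers the idealized model predicts about 53.6 seconds, while the truck fitted with a limiter never reaches 60mph at all: the first-order model is only right once the ceteris are forced to stay paribus.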

_________________________________

Excerpted from Escape from Model Land: How Mathematical Models Can Lead Us Astray and What We Can Do About It, by Erica Thompson. Copyright © 2022. Available from Basic Books, an imprint of Hachette Book Group, Inc.

Erica Thompson

Erica Thompson is a senior policy fellow at the London School of Economics’ Data Science Institute and a fellow of the London Mathematical Laboratory. With a PhD from Imperial College, she has recently worked on the limitations of models of COVID-19 spread, humanitarian crises, and climate change. She lives in West Wales.